|

1 | | -# Some Notes on Complexity |

| 1 | +# Complexity |

| 2 | + |

| 3 | +## What makes a program "better"? |

| 4 | + |

| 5 | +There are multiple ways of measuring whether a program is "better" than another, assuming they perform similar functions: |

| 6 | + |

| 7 | +- Correctness: a program that does not produce the correct output is worse than a program that is always correct, |

| 8 | +- Security: a program leaking personal information or infecting your computer will always be worse than one that does not, |

| 9 | +- Memory: the less memory a program requires to be installed and to run efficiently, the better. |

| 10 | +- Time: the faster the program produces the output, the better. |

| 11 | +- Other measures: ergonomics, cost, compatibility with operating systems, licence, network efficiency, may also come into play to decide which program is "best". |

| 12 | + |

| 13 | +Some criteria may be subjective (usability, for example), but some can be approached objectively: in a first approximation, memory (also called "space") and time are the two preferred measures. |

| 14 | + |

| 15 | +## Orders of magnitude in growth rates |

| 16 | + |

| 17 | +### Principles |

| 18 | + |

| 19 | +When measuring space or time, it is important to take the size of the input into account: the fact that word-processing software can open a document containing 1 word in 0.0001 second is no good if it takes hours to open a 10-page document. |

| 20 | + |

| 21 | +Hence, algorithms and programs are classified according to their *growth rates*, that is, how "fast" their run time or space requirements grow when the input size grows. |

| 22 | +This growth rate is classified according to ["orders"](https://en.wikipedia.org/wiki/Big_O_notation#Orders_of_common_functions), using the [*Big O notation*](https://en.wikipedia.org/wiki/Big_O_notation), whose definition follows: |

| 23 | + |

| 24 | +Using $|\cdot|$ to denote the absolute value, we write: |

| 25 | + |

| 26 | +$$f(x) = O(g(x))$$ |

| 27 | + |

| 28 | +if there exist positive numbers $M$ and $x_0$ such that |

| 29 | + |

| 30 | +$$|f(x)| \leqslant M |g(x)|$$ |

| 31 | + |

| 32 | +for all $x \geqslant x_0$. |
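
As a worked illustration of this definition (the function and the witnesses $M = 5$ and $x_0 = 1$ are chosen here for the example and are not part of the original notes), we can check that $3x^2 + 2x = O(x^2)$. For all $x \geqslant 1$ we have $x \leqslant x^2$, hence

$$|3x^2 + 2x| = 3x^2 + 2x \leqslant 3x^2 + 2x^2 = 5 |x^2|$$

Other witnesses would work just as well (for example $M = 4$ and $x_0 = 2$): the definition only asks that at least one such pair exists.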

| 33 | + |

| 34 | +### Common orders of magnitude |

| 35 | + |

| 36 | +Using $c>1$ for "a constant", $n$ for the size of the input and $\log$ for logarithm in base $2$, we have the following common [orders of magnitude](https://en.wikipedia.org/wiki/Big_O_notation#Orders_of_common_functions): |

| 37 | + |

| 38 | +- constant ($O(c)$), |

| 39 | +- logarithmic ($O(\log n)$), |

| 40 | +- linear ($O(n)$), |

| 41 | +- [linearithmic](https://en.wikipedia.org/wiki/Time_complexity#Quasilinear_time) ($O(n \times \log(n))$), |

| 42 | +- polynomial ($O(n^c)$), which includes |

| 43 | + - quadratic ($O(n^2)$), |

| 44 | + - cubic ($O(n^3)$), |

| 45 | +- exponential ($O(c^n)$), |

| 46 | +- factorial ($O(n!)$). |

| 47 | + |

| 48 | +### Simplifications |

| 49 | + |

| 50 | +In typical usage the $O$ notation is *asymptotic* (we are interested in very large values), which means that only the contribution of the terms that grow "most quickly" is relevant. Hence, we can use the following rules: |

| 51 | + |

| 52 | +- If $f(x)$ is a sum of several terms and one of them has the largest growth rate, that term can be kept and all others omitted. |

| 53 | +- If $f(x)$ is a product of several factors, any constants (factors in the product that do not depend on $x$) can be omitted. |
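
As an illustration of these two rules, consider the made-up function $f(x) = 4x^2 + 7x\log x + 10$ (an example introduced here, not taken from the original notes). The term $4x^2$ grows faster than $7x\log x$ and $10$, so a possible simplification goes as follows:

$$
\begin{align*}
f(x) & = 4x^2 + 7x\log x + 10 \\
     & = O(4x^2) && \text{(keep the fastest-growing term)} \\
     & = O(x^2) && \text{(omit the constant factor 4)}
\end{align*}
$$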

| 54 | + |

| 55 | +There are additionally some very useful [properties of the big O notation](https://www.geeksforgeeks.org/dsa/properties-of-asymptotic-notations/): |

| 56 | + |

| 57 | +- Reflexivity: $f(n) = O(f(n))$, |

| 58 | +- Transitivity: $f(n) = O(g(n))$ and $g(n) = O(h(n))$ implies $f(n) = O(h(n))$, |

| 59 | +- Constant factor: $f(n) = O(g(n))$ and $c > 1$ implies $c\times f(n) = O(g(n))$, |

| 60 | +- Sum rule: $f(n) = O(g(n))$ and $h(n) = O(k(n))$ implies $f(n) + h(n) = O(\max (g(n), k(n)))$, |

| 61 | +- Product rule: $f(n) = O(g(n))$ and $h(n) = O(k(n))$ implies $f(n) \times h(n) = O(g(n) \times k(n))$, |

| 62 | +- Composition rule: $f(n) = O(g(n))$ and $h(n) \to \infty$ as $n \to \infty$ implies $f(h(n)) = O(g(h(n)))$. |
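
To see how the sum and product rules are used in practice, here is a small sketch with two hypothetical functions, $f(n) = 5n$ and $h(n) = 3\log n$ (chosen for illustration only), for which $f(n) = O(n)$ and $h(n) = O(\log n)$:

$$
\begin{align*}
f(n) + h(n) & = 5n + 3\log n = O(\max(n, \log n)) = O(n) \\
f(n) \times h(n) & = 15 \, n \log n = O(n \times \log n)
\end{align*}
$$

The sum ends up linear because $n$ dominates $\log n$ for large $n$, while the product is linearithmic.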

| 63 | + |

| 64 | +$$ |

| 65 | +\begin{align*} |

| 66 | +f(n) & = O(f(n)) \tag{Reflexivity} |

| 68 | +\end{align*} |

| 69 | +$$ |

| 70 | + |

| 71 | +<!-- |

| 72 | + Example 1: f(n) = 3n^2 + 2n + 1000 log n + 5000 |

| 73 | + After ignoring lower order terms, we get the highest order term as 3n^2 |

| 74 | + After ignoring the constant 3, we get n^2 |

| 75 | + Therefore the Big O value of this expression is O(n^2) |

| 76 | +

|

| 77 | + Example 2: f(n) = 3n^3 + 2n^2 + 5n + 1 |

| 78 | + Dominant Term: 3n^3 |

| 79 | + Order of Growth: Cubic (n^3) |

| 80 | + Big O Notation: O(n^3) |

| 81 | +--> |

| 82 | + |

| 83 | + |

2 | 84 |

|

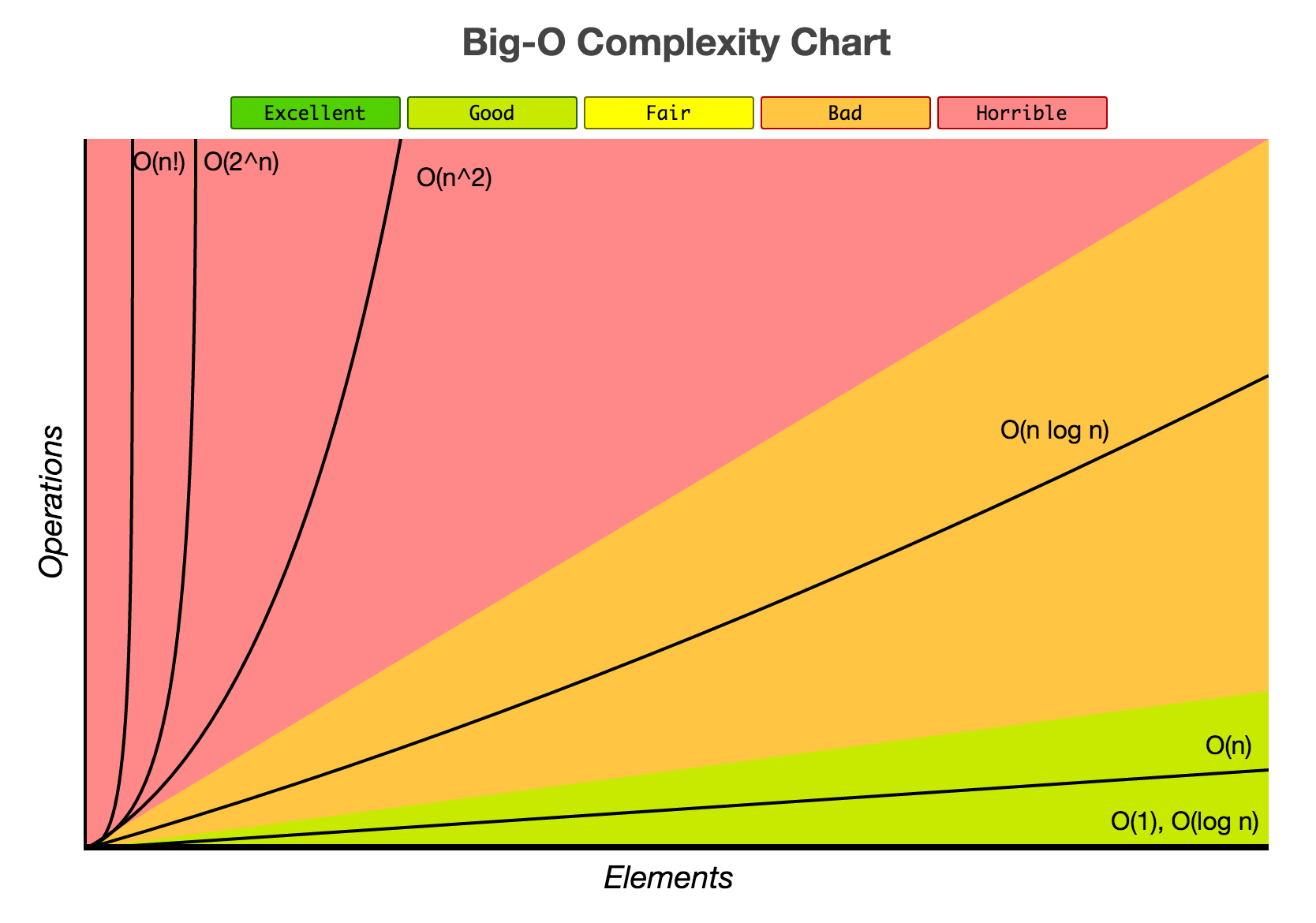

3 | 85 | Have a look at the [Big-O complexity chart](https://www.bigocheatsheet.com/): |

4 | 86 |

|

5 | 87 |  |

6 | 88 |

|

7 | | -A function [has an order](https://en.wikipedia.org/wiki/Big_O_notation#Orders_of_common_functions), it can be for example |

8 | | - |

9 | | -- constant (O(c)), |

10 | | -- logarithmic (O(log n)), |

11 | | -- linear (O(n)), |

12 | | -- [linearithmic](https://en.wikipedia.org/wiki/Time_complexity#Quasilinear_time) (O(n log n)), |

13 | | -- quadratic (O(n^2)), |

14 | | -- cubic (O(n^3)), |

15 | | -- exponential (O(c^n)), |

16 | | -- factorial (O(n!)). |

17 | 89 |

|

18 | 90 | This can make a *very* significant difference, as exemplified in the following code: |

19 | 91 |

|

20 | 92 | ``` |

21 | | -!include code/snippets/complexity.cs |

| 93 | +!include code/projects/GrowthMagnitudes/GrowthMagnitudes/Program.cs |

22 | 94 | ``` |
